I came across this fascinating (and horrifying) article by Ellie Hall yesterday on the “women of ISIS,” many of whom are foreigners who have traveled from “Western” countries to join the fighters in Syria. The piece is worth a read, but I was particularly struck by the first paragraph, in which—for the first time, I believe—a Facebook spokesperson states outright that it’s company policy to remove terrorist organizations.

It isn’t actually news to me that this is happening across social networks. Twitter has grappled with the issue, from dealing with calls from the State Department to, more recently, attempting to shut down ISIS accounts (most of which pop right back up again, like in a game of whack-a-mole). Twitter is fairly inconsistent in its practice and opaque in its policy, which bans threats of violence, but not terrorist organizations.

Now, if you clicked through the links above, you’ll know that my opinion is that these companies are incapable of regulating content consistently and that, in any case, there are good reasons to allow terrorist groups to remain on these networks (take, for example, Hezbollah, a US-designated terrorist organization that is also a legitimate political party in its home country of Lebanon). That’s a separate point for a separate conversation, however. What I want to discuss today is, specifically, how Facebook regulates this content.

Let’s start with the rules. Facebook’s Terms of Service, written in legalese, do not mention the word “terror” in any of its iterations. Its Principles, which provide for “the freedom to connect online with anyone” and to share whatever information they desire, also don’t mention it. The Community Standards, which are meant to guide users in non-legal terms as to what they can and cannot do on the site, do mention “terror” in their section on “violence and threats,” which reads [bold is mine]:

Safety is Facebook’s top priority. We remove content and may escalate to law enforcement when we perceive a genuine risk of physical harm, or a direct threat to public safety. You may not credibly threaten others, or organize acts of real-world violence. Organizations with a record of terrorist or violent criminal activity are not allowed to maintain a presence on our site. We also prohibit promoting, planning or celebrating any of your actions if they have, or could, result in financial harm to others, including theft and vandalism.

“Terrorist or violent criminal activity.” Seems clear enough, and yet, no statement on what governs this principle. If such a rule were governed by law, it would perhaps be limited to US-designated terror organizations. If it were inclusive, surely it would include groups designated as “terrorist” by governments other than the US. And if it were truly inclusive, it would also include state violence (given that the aforementioned Principles specifically refer to the equality of users, one would truly hope so).

And so I did a little experiment. The first thing I noticed was quite interesting. A few years back, Facebook designated official “Pages” that pulled information from Wikipedia’s API. Take, for example, my own name: when you search for it, you find three results: my personal, friends-only profile; a “fan page” set up years ago by a friend; and my “official” Page with information gleaned from Wikipedia.

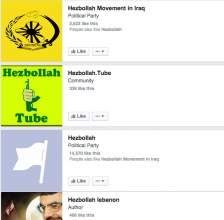

I decided to do a few searches for organizations that fit the above criteria. Starting with Hezbollah, I found a number of results (left). The first, “Hezbollah Movement in Iraq,” is an official pull from Wikipedia. The second, “Hezbollah Tube,” is a “community” page for what I can only imagine is a YouTube channel. The third, the “official” page for Hezbollah, should pull from Wikipedia, but as you can see from the lack of image, it’s oddly blank. Click through, and you’ll find that the entire page is blank. You can tell it’s meant to pull from Wikipedia by the note at the bottom, and the page still allows “likes,” but for some reason Facebook has killed the page’s content.

And so I did a few more searches, to see if the same applied to other groups. The “official” page for “Islamic State of Iraq and Levant” was (very troublingly) labeled a “country,” but its content was empty. The page of Islamic Jihad Union, a lesser-known Uzbek group that the US designates as a Foreign Terrorist Organization (FTO), was also empty. Al-Shabaab’s page, however, was full, as was that of Al-Manar (both designated FTOs). The Jewish Defense League’s English page was blank, but its French one was alive and well. Interestingly, the official page for the Westboro Baptist Church (not a designated terrorist group, but certainly prone to hateful speech) also came up blank.

Of course, there are always numerous “fan” or “community” pages for designated terrorist groups. It’s unclear whether Facebook is proactively removing them, but they are undoubtedly being reported and taken down.

What fascinates me about this is that, while Facebook is willing to remove the Wikipedia content (undoubtedly neutral information) for these pages, it was reluctant some months ago to remove a page calling for the death of Palestinians. Similarly, state organizations that promote violence (such as the Israel Defense Forces) are not only allowed to say whatever they please but are glorified with a “verified” button. Now, of course this comes as no surprise to me, except for the fact that such “official” groups (with or without the verified button) aren’t held to the same standards as other users, despite Facebook’s “principles.”

Remember how social networks removed the accounts of users who re-posted the video of James Foley’s horrific beheading? The same standard wasn’t applied to the New York Post or the Israeli consulate of Ireland, both of which also reposted the content on Twitter. In fact, the Israel in Ireland Facebook account contains all sorts of racist content and content glorifying Israel’s recent attack on Gaza. But search for Hamas, and you can’t even read content from Wikipedia to understand who they are and come to your own conclusions.

This is an issue of neutrality and of consistency. If Facebook’s principles serve to treat users equally and to prevent the spread of violent content, then users should be able to seek information on terrorist groups; at the very least, neutral information from Wikipedia should remain available. And on the flipside, state organizations shouldn’t be able to glorify violence on their pages either. By treating these groups differently, Facebook is essentially meddling in global affairs. The US might see Hezbollah as a terrorist organization, but Lebanese view it as a political party (and given that Samir Geagea might soon become President, the effect Facebook’s censorship of one side could have is exceptionally troubling). If Facebook seeks to enable political participation (which it surely does based on its actions), then it must either step away from US centrism in its rules* or apply them consistently across the board.

*Someone will inevitably cry “but material support laws!” to which I point out that there is not yet any case law related to whether or not hosting content from such a group constitutes a violation of 18 U.S. Code § 2339A or 18 U.S. Code § 2339B (laws that have been incredibly problematic in their application) and as such, I propose that a content host should test the waters.

2 replies on “Let’s talk about terrorism and Facebook”

Interesting article Jillian.

Facebook, like many social networking sites, basically relies on reports from the public, or from internet obsessives, to carry out its content regulation. The first step is to let people flag certain content or people for reasons that can then be checked while a claim is processed. This does not determine whose fans are allowed to do so and whose are not. We all know that information in online search engines is best found in its original language, which makes me assume two things about your findings. The first is that if you search for Hezbollah and other Arab terrorist groups in Arabic, you will see more content and conversations, i.e., more information. The second is that fans of terrorist-classified groups have neither the knowledge, the time, nor the will to drive internet attacks on opposing pages like the Israeli ones, or even local governmental ones.

Hey Jillian, great insights. Thanks for bringing this up. I am just returning from a conference in HK where I had the privilege of listening to speakers like Jeff Moss and Brad Templeton, all on harnessing the digital dragon and the future of the internet. I am really getting into the subject now and I love your writing style.